Online Safety Act Implementation: A Test of Ofcom's Commitment to Protecting Vulnerable Users Under New Leadership

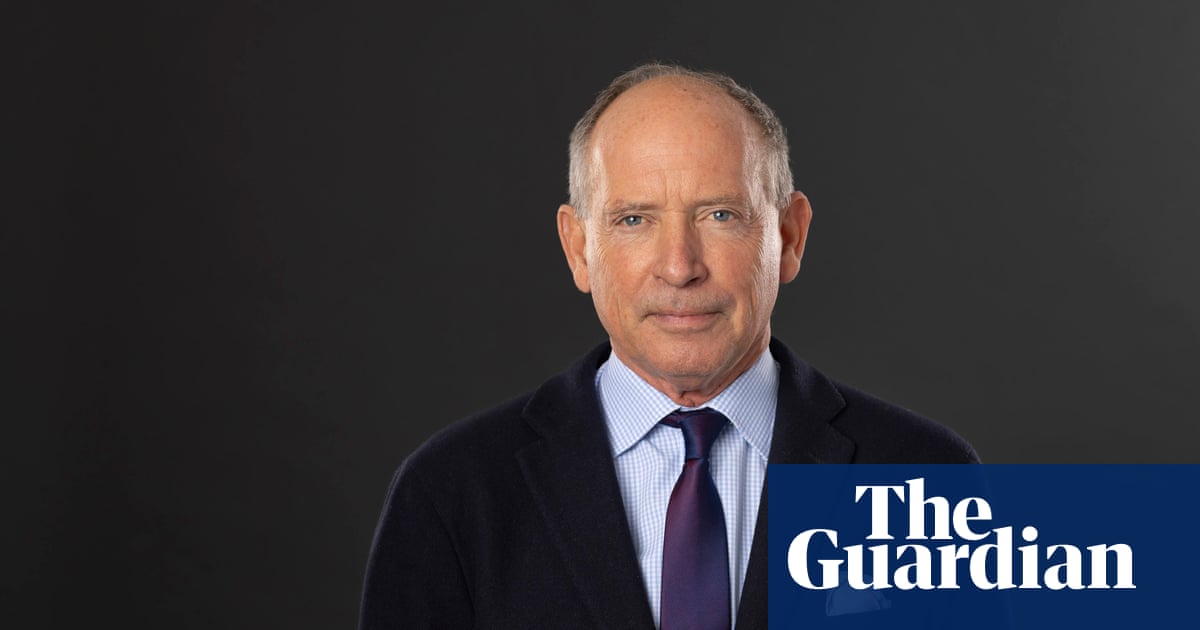

As Ian Cheshire takes the helm at Ofcom, advocates demand stronger action to safeguard children and marginalized communities from online harms.

Ian Cheshire's appointment as the head of Ofcom arrives at a crucial moment, as the regulator struggles to implement the Online Safety Act (OSA) and to deliver on its promise of protecting vulnerable people from online harms. The act, intended to regulate social media and other online platforms, faces criticism for not going far enough in addressing the systemic issues that allow harmful content to proliferate.

Ofcom's broad regulatory powers extend to telecommunications, broadcasting, and postal services, but policing the online environment has become increasingly central to its mission. With the rise of social media and the spread of harmful content, effective regulation is more urgent than ever, especially for children and marginalized groups, who are disproportionately affected by online abuse and exploitation.

The Online Safety Act, passed in 2023, represents a significant step towards regulating online platforms. However, many argue that the act lacks the teeth necessary to hold the powerful corporations behind those platforms accountable. Advocates such as Ian Russell, whose daughter Molly took her own life after viewing harmful online content, have called for stronger enforcement mechanisms and a more proactive approach to protecting children online.

Technology Secretary Liz Kendall's concern over delays in implementing the OSA reflects the growing pressure on Ofcom to demonstrate its commitment to online safety. While updating the act is not Ofcom's direct responsibility, the regulator must actively engage with policymakers to ensure that the legislation is fit for purpose and effectively protects vulnerable users.

The initial steps in implementing the OSA, such as age-verification measures, are a welcome start, but much more needs to be done. Ofcom must prioritize the development of robust enforcement mechanisms, including the ability to have harmful content removed quickly and to hold platforms accountable when they fail to protect their users.

Ofcom also has a responsibility to address the systemic inequalities that contribute to online harm. This includes tackling hate speech, disinformation, and other forms of online abuse that disproportionately target marginalized communities. The regulator must work with civil society groups and experts to develop strategies that are both effective and equitable.

The government's desire for Ofcom to accelerate its efforts in online safety is understandable, given the urgent need to protect vulnerable users. However, the regulator must be given the resources and support it needs to carry out its mandate effectively. This includes investing in training for Ofcom staff, developing partnerships with civil society organizations, and working with international regulators to share best practices.